RAG

Retrieval-Augmented Generation (RAG) connects LLMs to external knowledge sources at inference time, so a model can ground its answers in current information without retraining. It is a core pattern in production AI systems.

4 articles

Towards Data Science · 1 min read · Yesterday

Hybrid Search and Re-Ranking in Production RAG

Towards Data Science · 1 min read · 4 days ago

RAG Is Blind to Time — I Built a Temporal Layer to Fix It in Production

Machine Learning Mastery · 1 min read · May 4, 2026

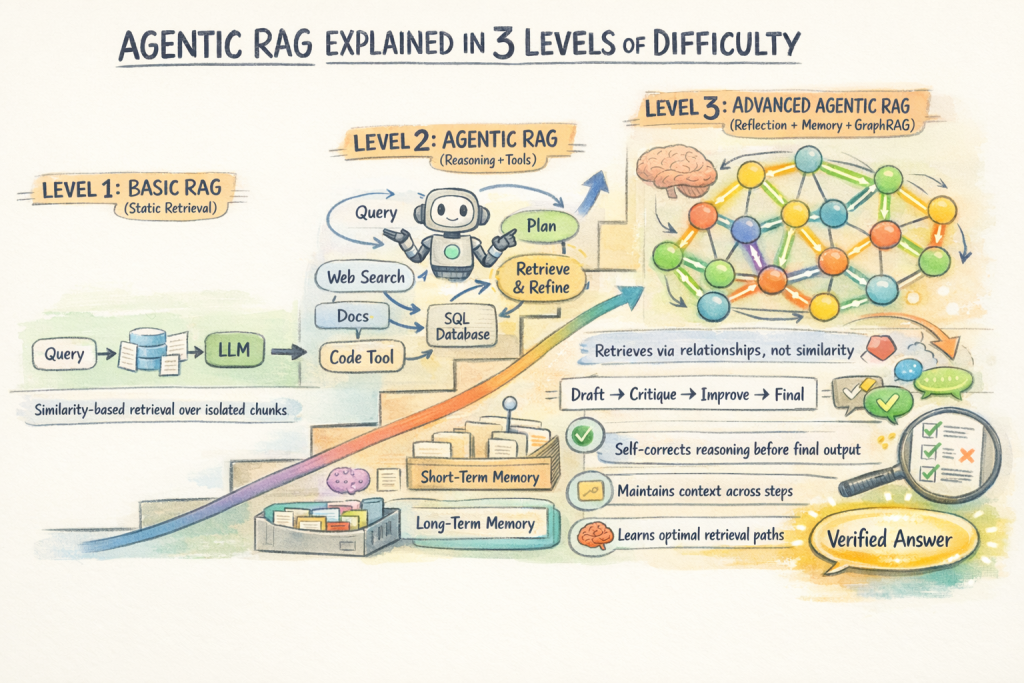

Agentic RAG Explained in 3 Levels of Difficulty

Machine Learning Mastery · 1 min read · Apr 30, 2026