AI News Hub: Latest Curated Updates for Engineers

Agentic AI 2026 – RAG, Enterprise Agents & Production Tools

Daily curated AI news and updates on agentic AI, RAG, enterprise agents, production tools, LLMs, scaling, governance and more.

Save engineers 2 hours per day by cutting through information overload.

Curated blog updates on agentic AI, RAG & production tools

CSPNet Paper Walkthrough: Just Better, No Tradeoffs

The CSPNet paper proposes a novel architecture that achieves state-of-the-art performance in image classification tasks without compromising on efficiency, making it a promising solution for real-world applications. This walkthrough provides a comprehensive review of the paper and a step-by-step PyTorch implementation of the CSPNet model.

Inference Scaling (Test-Time Compute): Why Reasoning Models Raise Your Compute Bill

Reasoning models significantly increase token usage, latency, and infrastructure costs in production systems due to their complex inference processes. This article explains the reasons behind this increase and its implications for AI engineers.
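A back-of-the-envelope sketch of the cost effect described above. The per-token prices and token counts are illustrative assumptions, not figures from the article, and the sketch assumes hidden reasoning tokens are billed at the output rate.

```python
def request_cost(input_tokens, output_tokens, reasoning_tokens=0,
                 price_in_per_m=1.0, price_out_per_m=4.0):
    """Estimate the USD cost of one request.

    Prices are hypothetical and expressed per million tokens.
    Assumption: reasoning tokens are billed like output tokens.
    """
    billed_output = output_tokens + reasoning_tokens
    total = input_tokens * price_in_per_m + billed_output * price_out_per_m
    return total / 1_000_000


# Same prompt and same visible answer, with and without hidden reasoning.
plain = request_cost(input_tokens=1_000, output_tokens=500)
reasoning = request_cost(input_tokens=1_000, output_tokens=500,
                         reasoning_tokens=4_000)

print(f"plain: ${plain:.4f}, reasoning: ${reasoning:.4f}, "
      f"ratio: {reasoning / plain:.1f}x")
```

Under these assumed numbers the visible answer is identical, yet the reasoning request costs several times more because the extra tokens are generated and billed even though the user never sees them.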

Which Regularizer Should You Actually Use? Lessons from 134,400 Simulations

This article presents a decision framework for choosing between Ridge, Lasso, and ElasticNet regularizers in machine learning models, based on three quantities that can be computed before fitting the model. The framework is derived from an analysis of 134,400 simulations, providing a data-driven approach to regularizer selection.

How a 2021 Quantization Algorithm Quietly Outperforms Its 2026 Successor

A 2021 rotation-based vector quantization algorithm has been found to outperform its 2026 successor, highlighting the importance of revisiting and refining existing techniques in the field of AI engineering. This discovery underscores the potential for optimizing and improving upon established methods, rather than solely relying on newer approaches.

Salesforce launches Agentforce Operations to fix the workflows breaking enterprise AI

Salesforce has introduced Agentforce Operations, a solution aimed at addressing the underlying workflow issues that hinder the effectiveness of enterprise AI systems. This new layer is designed to improve the reliability and scalability of AI-powered workflows, enabling organizations to push agents deeper into back-office systems.

200,000 MCP servers expose a command execution flaw that Anthropic calls a feature

A critical command execution flaw was discovered in the Model Context Protocol (MCP), a widely adopted open standard for AI agent-to-tool communication, potentially exposing 200,000 servers to security risks. The flaw, described as a "feature" by Anthropic, highlights the importance of rigorous security testing and review in AI engineering.

The Federal Data Paradox: Rich in Data, Poor in Access

The Federal Data Paradox highlights the disparity between the abundance of data within federal agencies and the limited access to this data, hindering effective decision-making and intelligence gathering. This paradox is a significant challenge for federal data modernization and cross-agency intelligence efforts.

AWS Transform now automates BI migration to Amazon Quick in days

AWS Transform now automates business intelligence (BI) migration to Amazon Quick, completing migrations in days. This automation helps organizations unlock Amazon Quick capabilities and improve their data consumption experience.

The AI scaffolding layer is collapsing. LlamaIndex's CEO explains what survives.

The AI scaffolding layer, a set of tools and technologies required to develop and deploy Large Language Model (LLM) applications, is undergoing a significant shift as indexing layers, query engines, and retrieval pipelines become less necessary. According to LlamaIndex's CEO, this collapse is not a problem, but rather a natural progression of the field.

xAI launches Grok 4.3 at an aggressively low price and a new, fast, powerful voice cloning suite

xAI has launched Grok 4.3, a new large language model, at an aggressively low price, along with a fast and powerful voice cloning suite. This move is part of xAI's efforts to compete with OpenAI, as the company's founder, Elon Musk, faces off against OpenAI's co-founder, Sam Altman, in court.

How to Get Hired in the AI Era

In the AI era, hiring managers look for junior candidates who demonstrate a combination of technical skills, soft skills, and adaptability to learn and grow with the company. To stand out, candidates should focus on developing a strong foundation in AI and machine learning concepts, as well as showcasing their ability to work collaboratively and think critically.

Churn Without Fragmentation: How a Party-Label Bug Reversed My Headline Finding

This article presents a data quality case study from English local elections, highlighting the importance of categorical normalization and metric validation in avoiding the fragmentation of analytical groups. The study shows how a party-label bug reversed the headline finding, emphasizing the need to normalize labels rather than rely on them in raw form.

Ghost: A Database for Our Times?

Ghost is a novel database designed specifically for AI agents, addressing the limitations of traditional databases in handling complex AI workloads. This database aims to provide a scalable and efficient solution for AI applications, enabling them to process and store vast amounts of data.

Hidden IT problems are quietly creating risk, shadow IT, and lost productivity

A global survey of 4,200 managers and employees reveals that most digital dysfunction goes unreported: employees work around IT issues, which creates shadow IT and costs productivity. These hidden IT problems pose a significant risk to organizations, highlighting the need for improved IT support and employee engagement.

Model Risk Governance Is Not the Same as Risk Intelligence

Model risk governance and risk intelligence are two distinct concepts in financial services: model risk governance focuses on regulatory compliance, while risk intelligence focuses on proactive risk assessment and mitigation. Effective risk management requires both to ensure accurate and reliable risk assessments.

Why Powerful Machine Learning Is Deceptively Easy

Or why what appears powerful can be methodologically fragile. (via Towards Data Science)

Alibaba's Metis agent cuts redundant AI tool calls from 98% to 2% — and gets more accurate doing it

One of the key challenges of building effective AI agents is teaching them to choose between using external tools or relying on their internal knowledge. But large language models are often trained to blindly invoke tools, which causes latency bottlenecks, unnecessary API costs, and degraded reasoni...

Reinforcement fine-tuning with LLM-as-a-judge

In this post, we take a deeper look at how RLAIF, or RL with LLM-as-a-judge, works effectively with Amazon Nova models.

One tool call to rule them all? New open source Python tool Runpod Flash eliminates containers for faster AI dev

Runpod, the high-performance cloud computing and GPU platform designed specifically for AI development, today launched a new open source, MIT licensed, enterprise-friendly Python programming tool called Runpod Flash, and it is poised to make creation, iteration and deployment of AI systems inside ...

AWS Generative AI Model Agility Solution: A comprehensive guide to migrating LLMs for generative AI production

In this post, we introduce a systematic framework for LLM migration or upgrade in generative AI production, encompassing essential tools, methodologies, and best practices. The framework facilitates transitions between different LLMs by providing robust protocols for prompt conversion and optimizati...

Sun Finance automates ID extraction and fraud detection with generative AI on AWS

In this post, we show how Sun Finance used Amazon Bedrock, Amazon Textract, and Amazon Rekognition to build an AI-powered identity verification (IDV) pipeline. The solution improved extraction accuracy from 79.7% to 90.8%, cut per-document costs by 91%, and reduced processing time from up to 20 hour...

Unleashing Agentic AI Analytics on Amazon SageMaker with Amazon Athena and Amazon Quick

This post demonstrates how the agentic AI assistant from Amazon Quick transforms data analytics into a self-service capability by using Amazon Simple Storage Service (Amazon S3) for storage, Amazon SageMaker and AWS Glue for the lakehouse, Amazon Athena for serverless SQL querying across multiple storage fo...

Configuring Amazon Bedrock AgentCore Gateway for secure access to private resources

In this post, you will configure Amazon Bedrock AgentCore Gateway to access private endpoints using Resource Gateway, a managed construct that provisions Elastic Network Interfaces (ENIs) directly inside your Amazon VPC, one per subnet. You will explore two implementation modes (managed and self-man...

Proxy-Pointer RAG: Multimodal Answers Without Multimodal Embeddings

Structure is all you need. (via Towards Data Science)

How to Study the Monotonicity and Stability of Variables in a Scoring Model using Python

How can you validate that your variables tell a consistent risk story? (via Towards Data Science)

Effective KV Compression with TurboQuant

TurboQuant has recently been launched by Google as a novel algorithmic suite and library for applying advanced quantization and compression to large language models (LLMs) and vector search engines — an indispensable element of RAG systems....

Why AI Engineers Are Moving Beyond LangChain to Native Agent Architectures

Frameworks accelerated the first wave of LLM apps, but production demands a different architecture. (via Towards Data Science)

Why Your OEE Dashboard Is Lying to You

Use case: Overall Equipment Effectiveness and production intelligence. In most manufacturing plants...

Organizing Agents’ memory at scale: Namespace design patterns in AgentCore Memory

In this post, you will learn how to design namespace hierarchies, choose the right retrieval patterns, and implement AWS Identity and Access Management (IAM)-based access control for AgentCore Memory....

AI evals are becoming the new compute bottleneck

4 YAML Files Instead of PySpark: How We Let Analysts Build Data Pipelines Without Engineers

How we replaced Python pipelines with dlt, dbt, and Trino, and cut delivery time from weeks to one day. (via Towards Data Science)

Building AI Agents in Python with Pydantic AI

Run custom MCP proxies serverless on Amazon Bedrock AgentCore Runtime

This post shows you how to deploy a serverless MCP proxy on Amazon Bedrock AgentCore Runtime that gives you a programmable layer to implement proper governance, controls, and observability aligned with an organization's security policies....

NVIDIA Nemotron 3 Nano Omni model now available on Amazon SageMaker JumpStart

Today, we are excited to announce the day zero availability of NVIDIA Nemotron 3 Nano Omni on Amazon SageMaker JumpStart. In this post, we walk through the model architecture and key capabilities of Nemotron 3 Nano Omni, explore the enterprise use cases it unlocks, and show you how to deploy and run...

The Next Frontier of AI in Production Is Chaos Engineering

Blast-radius control tells you how much to break. Intent tells you what breaking it will teach. Only one of these has mature tooling. (via Towards Data Science)

Build and deploy an automatic sync solution for Amazon Bedrock Knowledge Bases

In this post, we explore an automated solution that detects S3 events and triggers ingestion jobs while respecting service quotas and providing comprehensive monitoring. This serverless solution uses an event-driven architecture to keep your knowledge base current without overwhelming the Amazon Bed...

Build Strands Agents with SageMaker AI models and MLflow

In this post, we demonstrate how to build AI agents using Strands Agents SDK with models deployed on SageMaker AI endpoints. You will learn how to deploy foundation models from SageMaker JumpStart, integrate them with Strands Agents, and establish production-grade observability using SageMaker Serve...

How Popsa used Amazon Nova to inspire customers with personalised title suggestions

In this post, we share how we applied Amazon Bedrock and the Amazon Nova family of models to reimagine our Title Suggestion feature. By combining metadata, computer vision, and retrieval-augmented generative AI, we now automatically generate creative, brand-aligned titles and subtitles across 12 lan...

Text Summarization with Scikit-LLM

Building Workforce AI Agents with Visier and Amazon Quick

In this post, we show how connecting the Visier Workforce AI platform with Amazon Quick through Model Context Protocol (MCP) gives every knowledge worker a unified agentic workspace to ask questions in. Visier helps ground the workspace in live workforce data and the organizational context that surr...

Cost-effective multilingual audio transcription at scale with Parakeet-TDT and AWS Batch

In this post, we walk through building a scalable, event-driven transcription pipeline that automatically processes audio files uploaded to Amazon Simple Storage Service (Amazon S3), and show you how to use Amazon EC2 Spot Instances and buffered streaming inference to further reduce costs....

NVIDIA and Google Cloud Collaborate to Advance Agentic and Physical AI

NVIDIA and Google Cloud have collaborated for more than a decade, co‑engineering a full‑stack AI platform that spans every technology layer — from performance‑optimized libraries and frameworks to enterprise‑grade cloud services. This foundation enables developers, startups and enterprises to push a...

AI Agent Memory Explained in 3 Levels of Difficulty

A stateless AI agent has no memory of previous calls....
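A minimal sketch of the difference between a stateless agent and one that keeps conversation history. Both classes are hypothetical stand-ins with no real model call; they only show where state lives.

```python
class StatelessAgent:
    """Every call starts from scratch: nothing is kept between calls."""

    def reply(self, message):
        return f"seen 1 message(s): {message}"


class MemoryAgent:
    """Keeps a running history so later calls can draw on earlier ones."""

    def __init__(self):
        self.history = []  # simplest possible in-process memory

    def reply(self, message):
        self.history.append(message)
        return f"seen {len(self.history)} message(s): {message}"


stateless = StatelessAgent()
remembering = MemoryAgent()

for text in ["hello", "what did I just say?"]:
    print(stateless.reply(text))
    print(remembering.reply(text))
```

In a real system the `history` list would typically be replaced by a store that survives restarts, but the contrast is the same: the stateless agent answers each call as if it were the first.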

QIMMA قِمّة ⛰: A Quality-First Arabic LLM Leaderboard

Autonomous AI at Scale: Adobe Agents Unlock Breakthrough Creative Intelligence With NVIDIA and WPP

AI agents are transforming how work gets done across all industries, accelerating everything from content creation to decision-making. NVIDIA’s expanded strategic collaborations with Adobe and WPP are bringing agentic AI to the center of enterprise marketing operations across creative production and...

NVIDIA and Partners Showcase the Future of AI-Driven Manufacturing at Hannover Messe 2026

Manufacturing is at an inflection point. Across every major industrial economy, the pressure to do more with less — due to faster design cycles, leaner operations and strain on skilled labor pools — is accelerating the shift to AI-driven production. The question is no longer whether to adopt AI, but...

Share on X

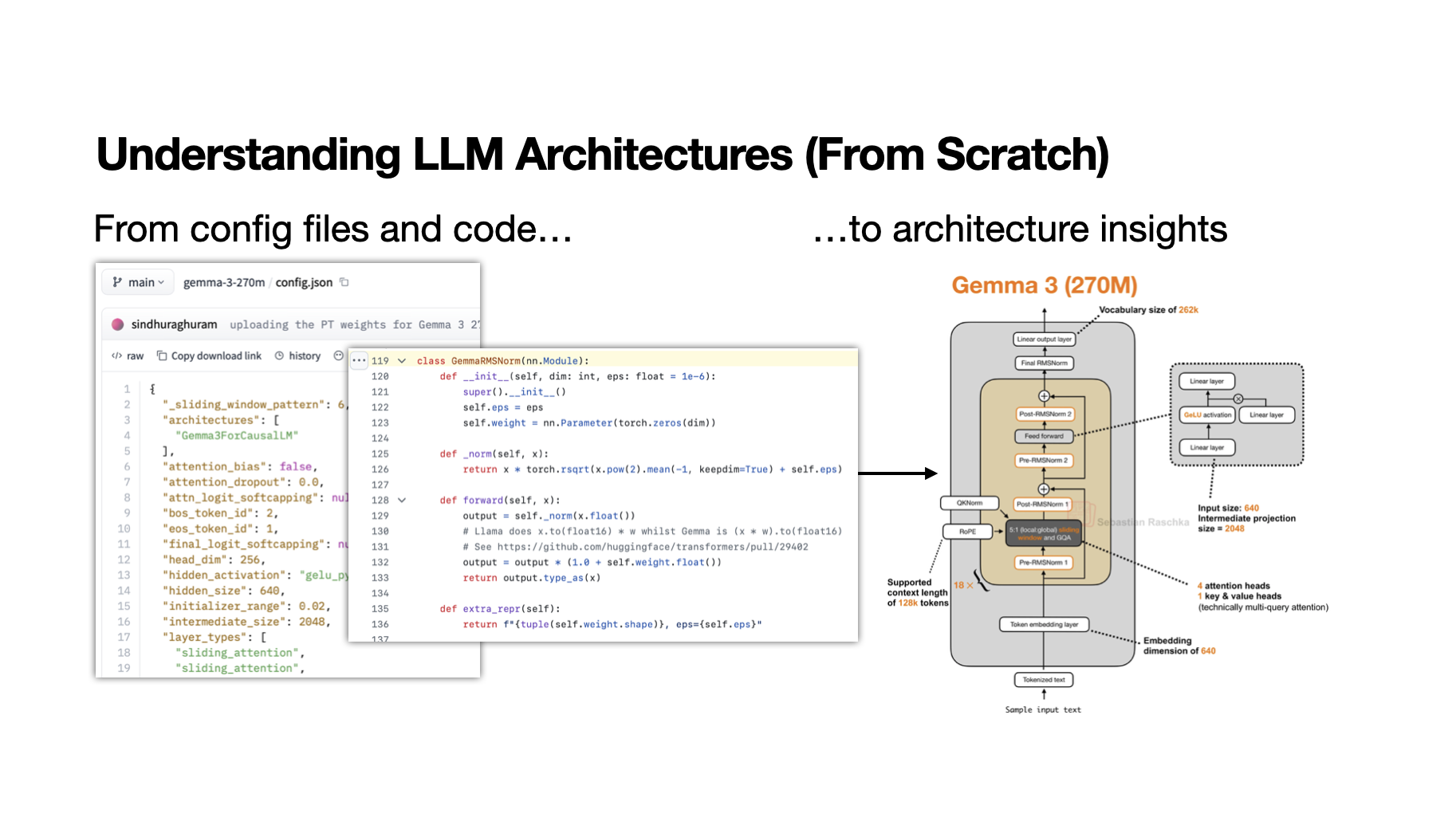

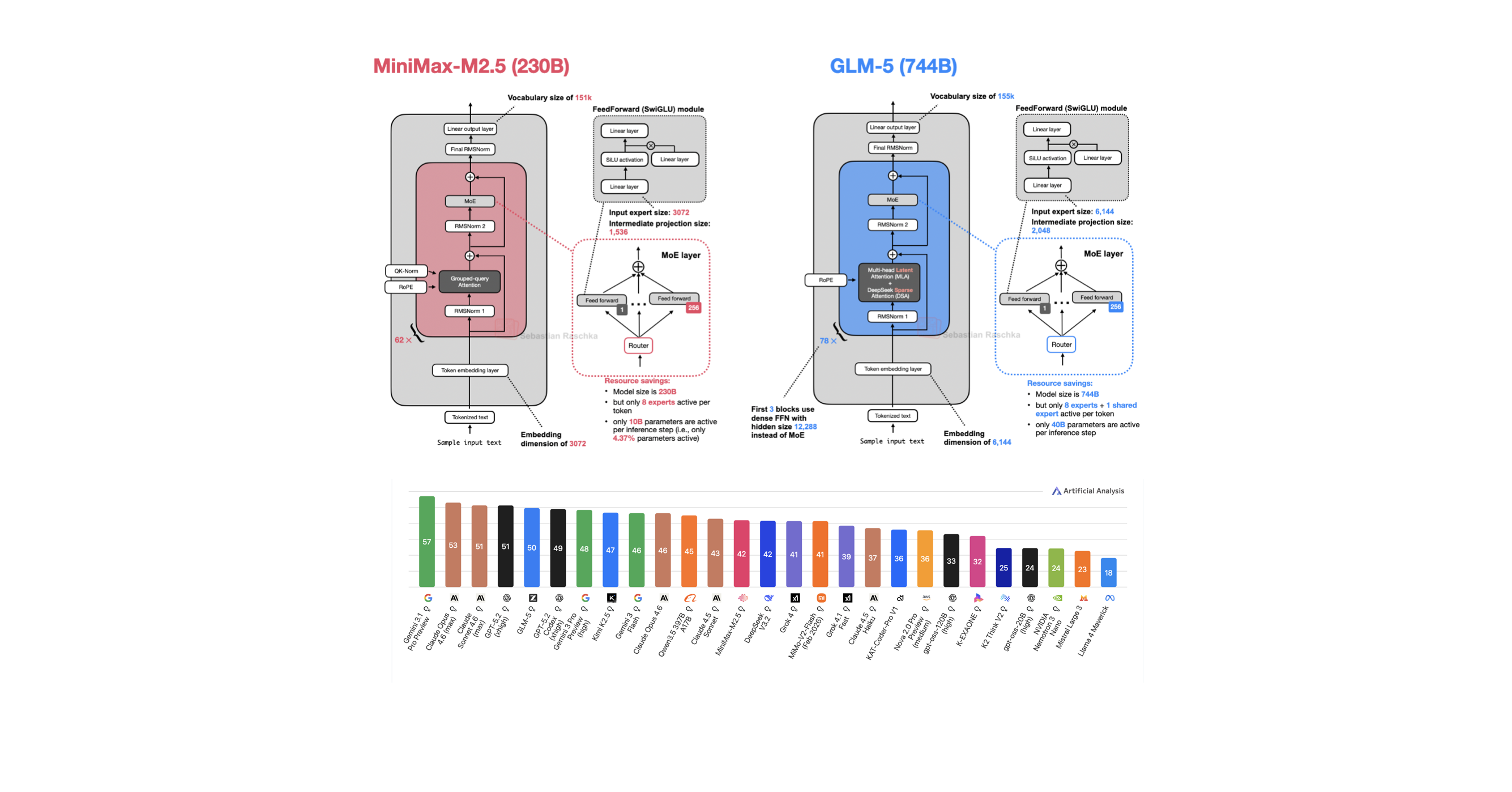

My Workflow for Understanding LLM Architectures

A learning-oriented workflow for understanding new open-weight model releases...

The Complete Guide to Inference Caching in LLMs

Calling a large language model API at scale is expensive and slow....
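One common way to reduce that cost is an exact-match response cache in front of the model call. The sketch below is a simplified illustration, not the guide's implementation: `ResponseCache` and `fake_model` are hypothetical names, and the model call is a local stub rather than a real API.

```python
import hashlib
import json


class ResponseCache:
    """Exact-match cache keyed on model name, prompt, and sampling parameters."""

    def __init__(self, call_model):
        self._call_model = call_model  # any callable(model, prompt, **params)
        self._store = {}
        self.hits = 0
        self.misses = 0

    def _key(self, model, prompt, params):
        # sort_keys makes the key independent of keyword-argument order
        payload = json.dumps(
            {"model": model, "prompt": prompt, "params": params},
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def complete(self, model, prompt, **params):
        key = self._key(model, prompt, params)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = self._call_model(model, prompt, **params)
        self._store[key] = result
        return result


def fake_model(model, prompt, **params):
    """Stand-in for a slow, expensive API call."""
    return prompt.upper()


cache = ResponseCache(fake_model)
cache.complete("demo-model", "hello", temperature=0)
cache.complete("demo-model", "hello", temperature=0)  # served from the cache
print(cache.hits, cache.misses)
```

Exact-match caching only pays off when identical requests repeat and the output is expected to be deterministic, which is why the sampling parameters are part of the key.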

No Need for Space Gear — Capcom’s ‘PRAGMATA’ Joins GeForce NOW on Launch Day

Head straight for orbit with GeForce NOW — no space helmet required. PRAGMATA, Capcom’s long-awaited sci-fi action adventure, touches down on GeForce NOW the same day it launches worldwide. The futuristic journey through a cold lunar station in the near future can be streamed instantly from the clou...

Python Decorators for Production Machine Learning Engineering

You've probably written a decorator or two in your Python career....
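As an illustration of the kind of decorator that is useful around production inference code, here is a small retry wrapper. It is a sketch under assumptions, not taken from the article: `retry` and `flaky_predict` are hypothetical names, and the failure is simulated.

```python
import functools
import time


def retry(times=3, delay=0.0, exceptions=(Exception,)):
    """Retry the wrapped function up to `times` attempts on the given exceptions."""

    def decorator(func):
        @functools.wraps(func)  # keep the original name and docstring
        def wrapper(*args, **kwargs):
            for attempt in range(1, times + 1):
                try:
                    return func(*args, **kwargs)
                except exceptions:
                    if attempt == times:
                        raise  # out of attempts: surface the last error
                    time.sleep(delay)

        return wrapper

    return decorator


calls = {"n": 0}


@retry(times=3)
def flaky_predict(x):
    """Simulated model call that fails twice before succeeding."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise RuntimeError("transient failure")
    return x * 2


print(flaky_predict(21), "after", calls["n"], "attempts")
```

Using `functools.wraps` matters in practice because logging and tracing tools usually report the function's name, which would otherwise show up as `wrapper`.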

Zoom Perspectives: Why ‘agentic’ work is the new enterprise standard

I had been waiting for the 2026 edition of Zoom Communications Inc.’s Perspectives, its recently held annual get-together for industry analysts, because I find Zoom to be the most interesting vendor in the communications business today. Though it has many competitors, its product roadmap has b...

Cloudflare expands Agent Cloud with new tools to build and scale AI agents

Cloudflare Inc. today announced an expansion of its Agent Cloud with new features that are designed to help developers build, deploy and scale agents. The new release includes a suite of infrastructure, security and developer tools that help to move AI agents from experimental demos on local laptops...

Commvault rolls out AI capabilities to secure agentic workflows and data

Data protection provider Commvault Systems Inc. today announced new artificial intelligence capabilities that it says will allow organizations to accelerate AI adoption with confidence while maintaining control over data, agents and recovery. The new capabilities allow enterprises to activate AI saf...

CoreWeave inks multiyear cloud deal with Anthropic

CoreWeave Inc. today announced that it has won a multiyear contract to supply Anthropic PBC with cloud infrastructure. The company’s shares closed 11% higher on the news. The data center capacity commissioned by Anthropic will start coming online later this year. CoreWeave said the i...

HPE accelerates quantum readiness ahead of Q-day

Enterprise tech is already preparing for “Q-day” — when quantum computing will be able to break today’s public-key cryptography. Although that date is still years away, staying ahead of the curve is a necessity if companies want to secure their infrastructure and incorporate quantum into the existin...

Anthropic tries to keep its new AI model away from cyberattackers as enterprises look to tame AI chaos

Sure, at some point quantum computing may break data encryption — but well before that, artificial intelligence models already seem likely to wreak havoc. That became starkly apparent this week when Anthropic announced a new model called Claude Mythos that it says is so good at uncovering cybe...

Anthropic and OpenAI target big businesses with enterprise-grade controls and lower pricing

Artificial intelligence leaders Anthropic PBC and OpenAI Group PBC are stepping up their efforts to compete for the enterprise, making their most advanced agentic tools more accessible to the largest organizations. In its update today, Anthropic revealed new “organization-wide controls” to help corp...

Yobi teams with Microsoft to deliver predictive consumer intelligence on Azure

Behavioral artificial intelligence company Yobi Ventures Inc. today announced a strategic partnership with Microsoft Corp. to unlock predictive consumer intelligence for U.S. enterprises. The partnership with Microsoft sees Yobi integrate its behavioral intelligence models with Azure infrastructure ...

Is a backlash brewing? Rapid innovation in AI coding and agents may force push for enterprise order and control

Artificial intelligence is proving to be a big bet for many companies across the enterprise landscape, and the gamblers are getting nervous. A survey of 2,400 global employees and C-suite leaders released this week by the agentic AI platform Writer Inc. found that 79% of executives acknowledge strug...

Tether launches open-source on-device AI framework for developers

Tether Operations S.A. de C.V., a company best known for operating and issuing the cryptocurrency of the same name, today announced the release of a fully open-source framework for developers to build artificial intelligence features directly onto devices and platforms. The framework is called the Q...

The race to deploy AI agents is exposing a critical gap in enterprise data management

As AI agents demand real-time access to live, governed data, database lifecycle management has moved from a back-office task to a strategic imperative for enterprise infrastructure. The complexity of modern environments means developers can no longer afford to manage fragmented data silos manually, ...

AWS previews a cloud-agnostic registry for managing agentic fleets at scale

Amazon Web Services Inc. is trying to make sure enterprises that embrace artificial intelligence agents and automation aren’t getting lost in the curse of “agentic sprawl.” The cloud computing giant is trying to prevent that from happening with the launch of AWS Agent Registry in preview today. It’s ...

NetApp and Nutanix say storage has become the last line of defense in the AI era

Companies are rethinking their technology foundations as AI infrastructure modernization and security demands grow. The result is surging demand for flexible platforms that can run legacy and modern applications simultaneously while keeping data secure and AI-ready. NetApp Inc. and Nutanix Inc. are ...

Blaize launches AI Services platform to move enterprise AI from pilot to production

Artificial intelligence computing company Blaize Holdings Inc. today announced the launch of Blaize AI Services, a new platform designed to help AI infrastructure providers and enterprises deploy production-ready, application-level AI services without building the underlying AI stack from scratch. M...

ServiceNow says it’s ‘AI-enabling’ its entire product suite to turbocharge enterprise automation

ServiceNow Inc. today announced a sweeping overhaul of its entire product lineup, saying that every single one of its services, platforms and products has been “AI-enabled” to enhance agentic automation in the enterprise. The company said it’s trying to push enterprises beyond the experimental stage...

Zencoder launches AI platform to automate the surrounding work that coding agents don’t handle

Zencoder, a startup building an artificial intelligence orchestration layer for software development and business, today announced the launch of Zenflow Work, a major expansion of its platform that focuses on automating everyday business — the work that coding agents don’t touch. The com...

New technique makes AI models leaner and faster while they’re still learning

Researchers use control theory to shed unnecessary complexity from AI models during training, cutting compute costs without sacrificing performance....

Share on X

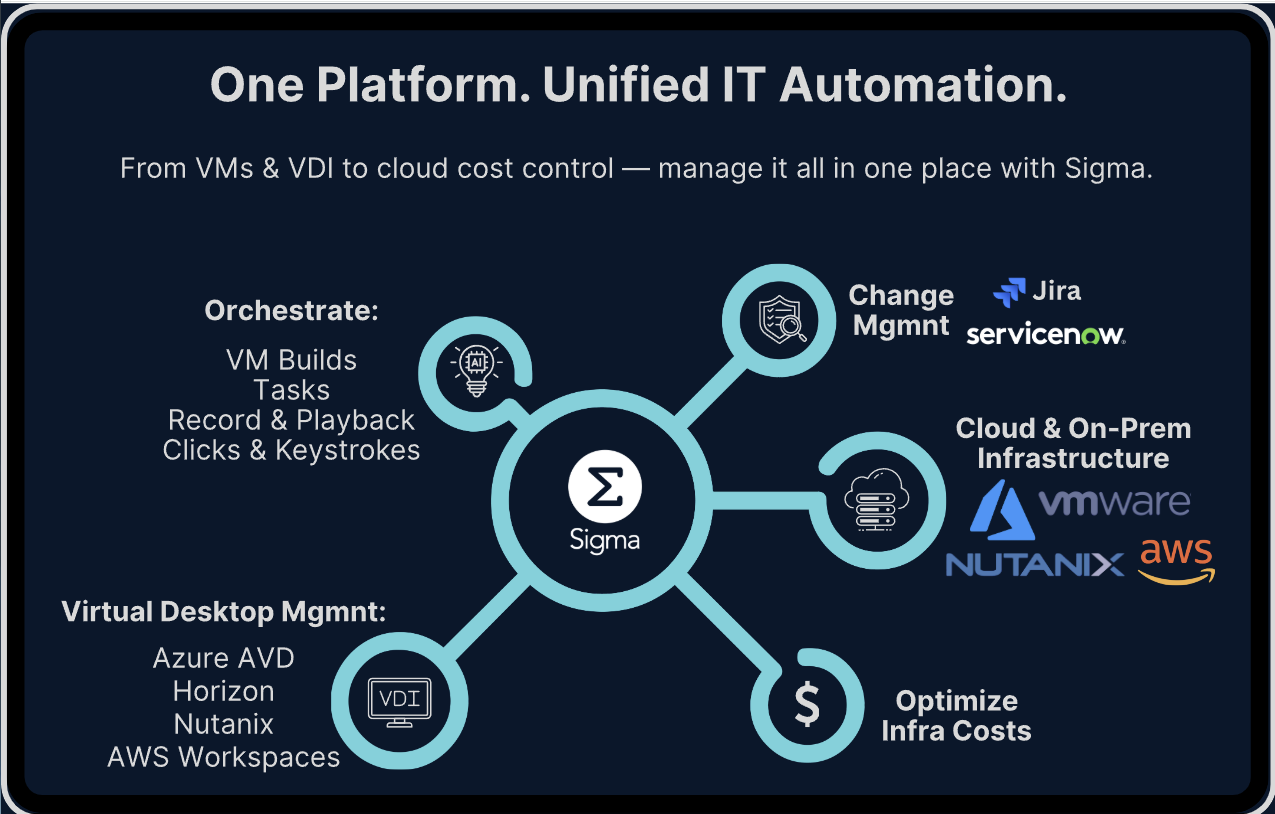

Sigma Automate emerges with $2.75M to tackle enterprise IT complexity with no-code automation

Sigma Automate Inc., a startup providing enterprises with no-code information technology automation, formally launched today with $2.75 million in funding to scale up its platform and expand its ability to simplify IT operations. Sigma Automate offers an artificial intelligence-native platform...

Share on X

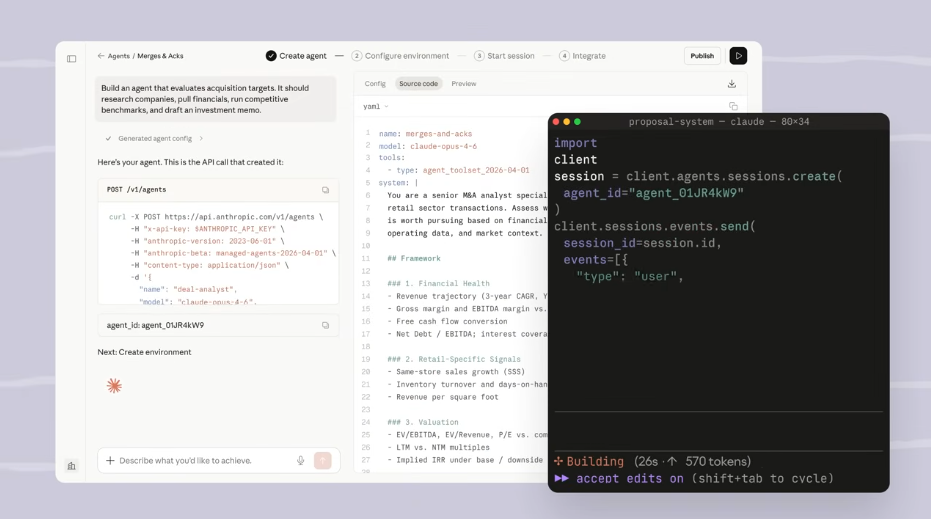

Anthropic launches Claude Managed Agents to speed up AI agent development

Anthropic PBC today launched Claude Managed Agents, a cloud service that customers can use to build artificial intelligence agents. The company says the offering shortens the development workflow from months to weeks. Deploying a production-grade agent requires software teams to build not only the a...

Today’s applications, tomorrow’s AI workloads: Nutanix is building the platform for both, says CEO

The enterprise computing stack is undergoing its most consequential transformation in decades, as agentic infrastructure shifts from an experimental workload to the organizing logic of every new application. That shift is forcing a fundamental rethink of what infrastructure must actually do — not ju...

Helping data centers deliver higher performance with less hardware

Researchers developed a system that intelligently balances workloads to improve the efficiency of flash storage hardware in a data center....

Share on X

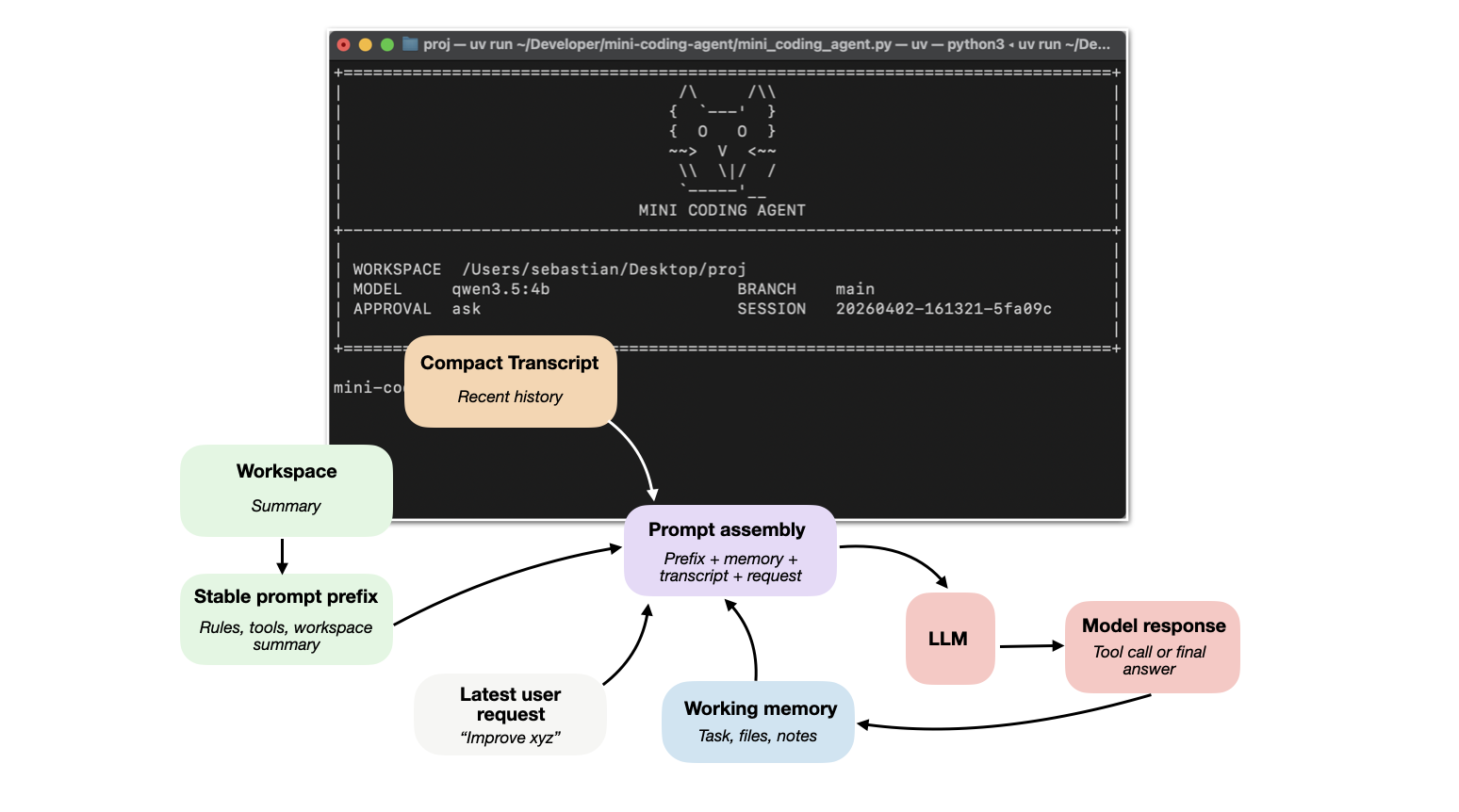

Components of A Coding Agent

How coding agents use tools, memory, and repo context to make LLMs work better in practice...

Press Start on April: GeForce NOW Brings 10 Games to the Cloud

No joke — GFN Thursday is skipping the tricks and heading straight into the games. April kicks off with ten new titles, bringing fresh adventures to GeForce NOW, including the launch of Capcom’s highly anticipated PRAGMATA. A dozen new games are available to stream this week, including Arknights: En...

Granite 4.0 3B Vision: Compact Multimodal Intelligence for Enterprise Documents

MIT researchers use AI to uncover atomic defects in materials

A new model measures defects that can be leveraged to improve materials’ mechanical strength, heat transfer, and energy-conversion efficiency....

Augmenting citizen science with computer vision for fish monitoring

MIT Sea Grant works with the Woodwell Climate Research Center and other collaborators to demonstrate a deep learning-based system for fish monitoring....

Share on X

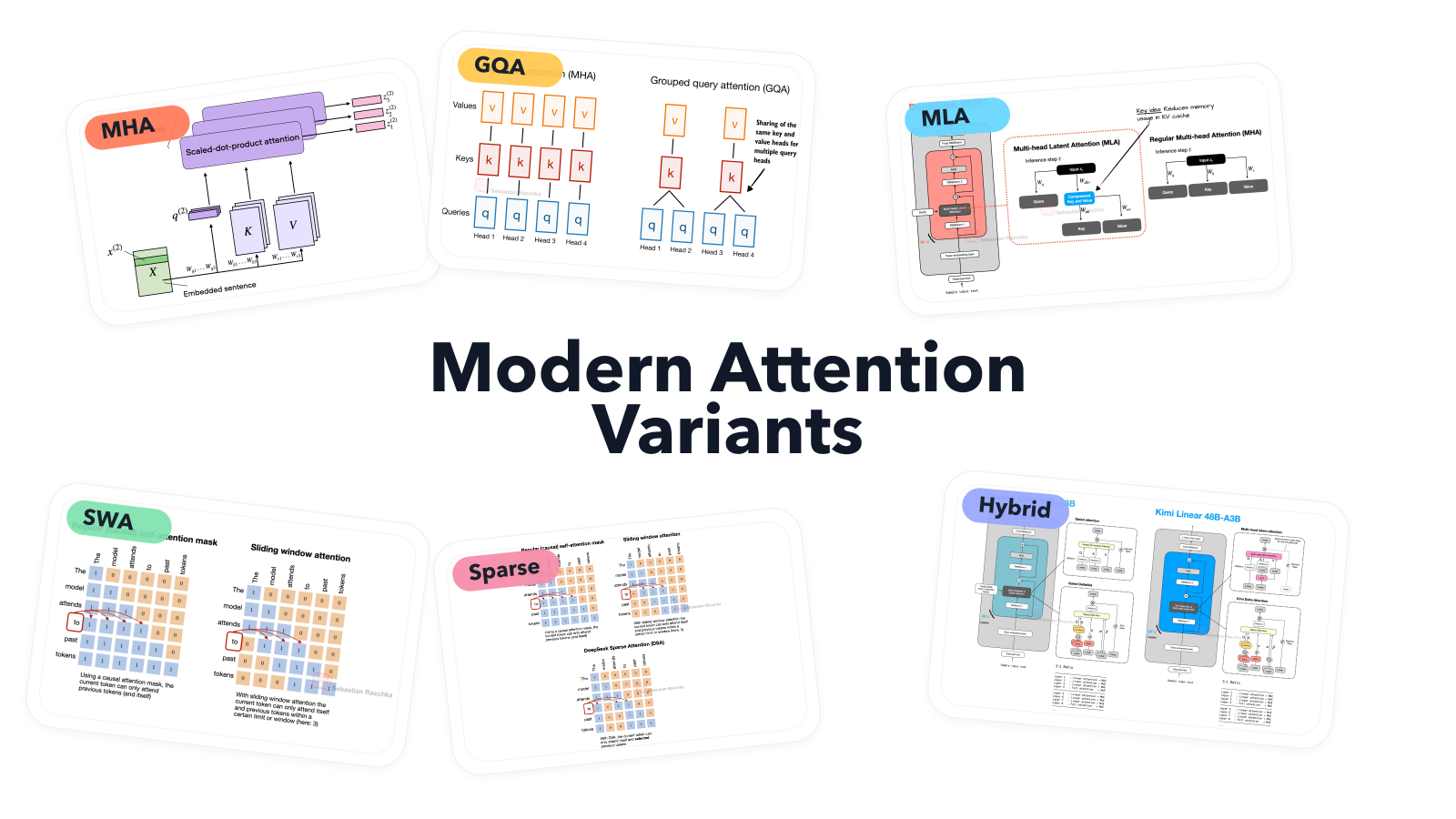

A Visual Guide to Attention Variants in Modern LLMs

From MHA and GQA to MLA, sparse attention, and hybrid architectures...

Generative AI improves a wireless vision system that sees through obstructions

With this new technique, a robot could more accurately detect hidden objects or understand an indoor scene using reflected Wi-Fi signals....

Holotron-12B - High Throughput Computer Use Agent

New MIT class uses anthropology to improve chatbots

MIT computer science students design AI chatbots to help young users become more social, and socially confident....

Introducing Storage Buckets on the Hugging Face Hub

New method could increase LLM training efficiency

By leveraging idle computing time, researchers can double the speed of model training while preserving accuracy....

A Dream of Spring for Open-Weight LLMs: 10 Architectures from Jan-Feb 2026

A Round Up And Comparison of 10 Open-Weight LLM Releases in Spring 2026...