Ahead of AI

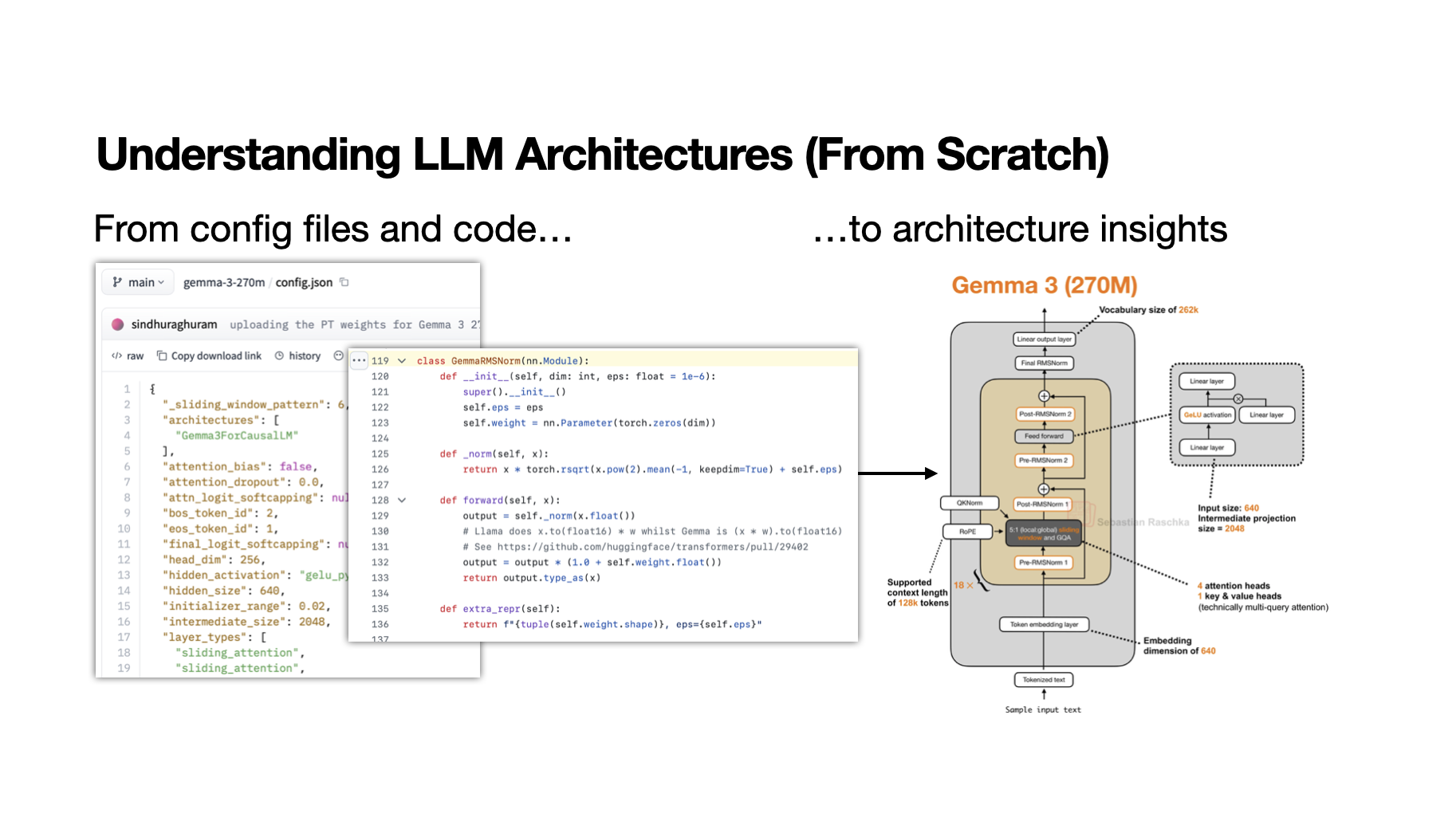

My Workflow for Understanding LLM Architectures


#llm #deployment #rag #langchain

Level: Intermediate

For: ML Engineers, NLP Researchers, AI Model Developers

✦TL;DR

This article presents a structured workflow for understanding Large Language Model (LLM) architectures, aimed at the moment a new open-weight model is released. Following the workflow lets AI engineers work through a model systematically, from its capabilities to its internal components, and apply it more effectively in their own applications.

⚡ Key Takeaways

- The workflow starts with an initial exploration of the model to understand its capabilities and limitations.

- It then analyzes the model's architecture, focusing on the individual components and how they interact.

- It ends with experiments on a range of tasks, which give practical insight into the model's performance and potential applications.
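The architecture-analysis step can be made concrete with a small script. The sketch below is not taken from the original article. It assumes the model ships a Hugging Face-style `config.json` with keys such as `num_hidden_layers`, `num_attention_heads`, and `num_key_value_heads`, and it derives a few component-level facts from them. The values in `SAMPLE_CONFIG` and the helper names `attention_variant` and `summarize` are hypothetical and chosen only for illustration.

```python
import json

# Hypothetical values in the style of a Hugging Face config.json.
# They are illustrative only and do not describe any specific released model.
SAMPLE_CONFIG = json.loads("""
{
  "hidden_size": 2048,
  "num_hidden_layers": 16,
  "num_attention_heads": 32,
  "num_key_value_heads": 8,
  "intermediate_size": 8192,
  "vocab_size": 128256
}
""")


def attention_variant(num_heads: int, num_kv_heads: int) -> str:
    """Classify the attention layout from the query and key/value head counts."""
    if num_kv_heads == num_heads:
        return "multi-head attention (MHA)"
    if num_kv_heads == 1:
        return "multi-query attention (MQA)"
    return "grouped-query attention (GQA)"


def summarize(config: dict) -> dict:
    """Derive a few architecture facts from a config dictionary."""
    heads = config["num_attention_heads"]
    # Assumption: if the key is absent, every query head has its own KV head.
    kv_heads = config.get("num_key_value_heads", heads)
    # Assumption: if no explicit head_dim is given, it is hidden_size / heads.
    head_dim = config.get("head_dim", config["hidden_size"] // heads)
    return {
        "layers": config["num_hidden_layers"],
        "hidden_size": config["hidden_size"],
        "head_dim": head_dim,
        "attention": attention_variant(heads, kv_heads),
        "queries_per_kv_head": heads // kv_heads,
        "ffn_expansion": config["intermediate_size"] / config["hidden_size"],
    }


if __name__ == "__main__":
    for name, value in summarize(SAMPLE_CONFIG).items():
        print(f"{name}: {value}")
```

To try this on a real release, replace `SAMPLE_CONFIG` with the parsed contents of the model's own `config.json`. Key names vary between model families, so the lookups may need adjusting.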
