Ahead of AI

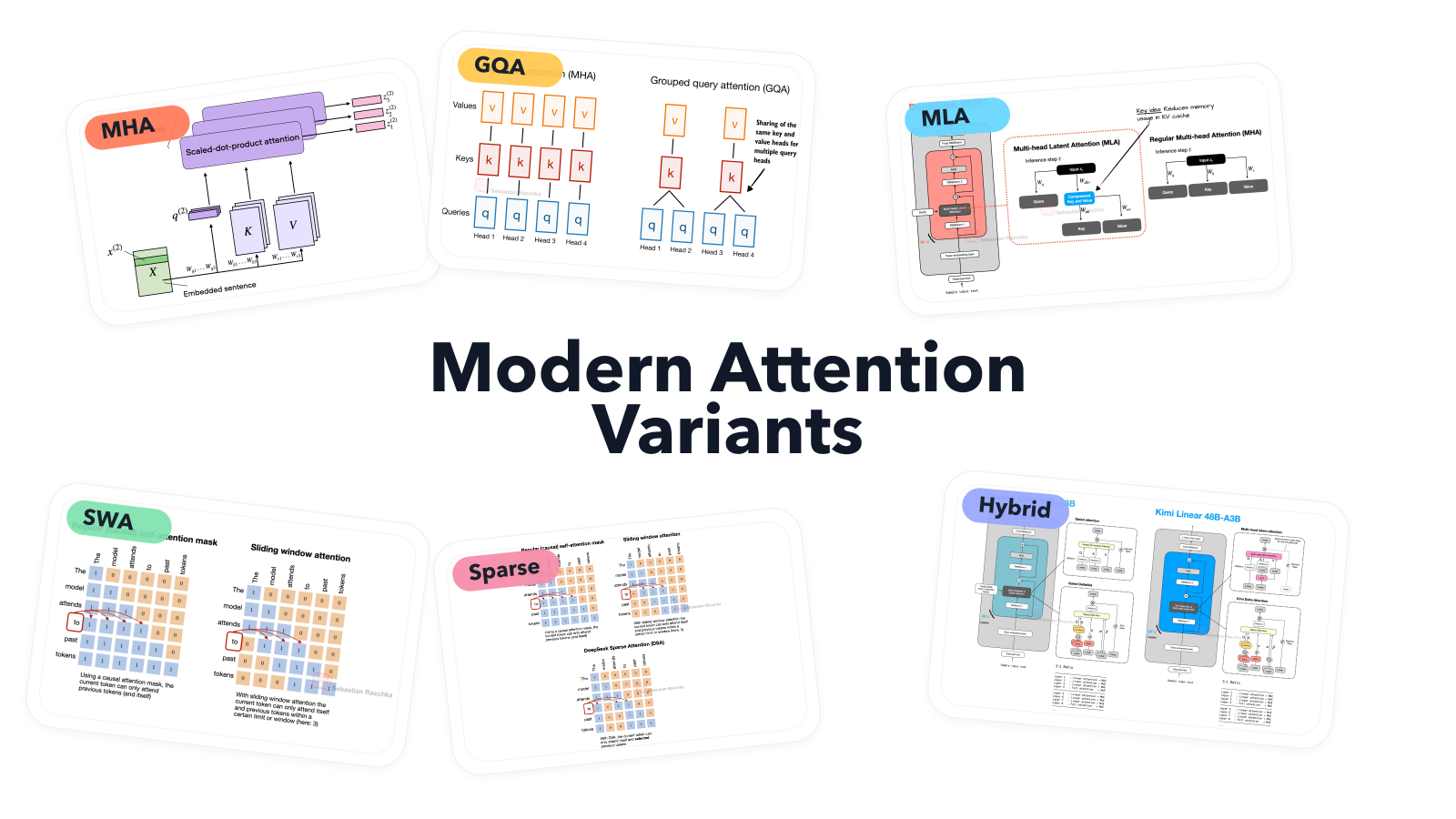

A Visual Guide to Attention Variants in Modern LLMs

✦TL;DR

From MHA and GQA to MLA, sparse attention, and hybrid architectures...

Want the full story? Read the original article.

Read on Ahead of AI ↗Share this summary

More like this

The Rise of Sports Intelligence: How the Lakehouse Turns Tracking Data into Competitive Advantage

Databricks Blog•#llm

Perceptron Mk1 shocks with highly performant video analysis AI model 80-90% cheaper than Anthropic, OpenAI & Google

VentureBeat AI•#llm

From Vibe Coding to Spec-Driven Development

Towards Data Science•#llm

Navigating EU AI Act requirements for LLM fine-tuning on Amazon SageMaker AI

AWS ML Blog•#llm